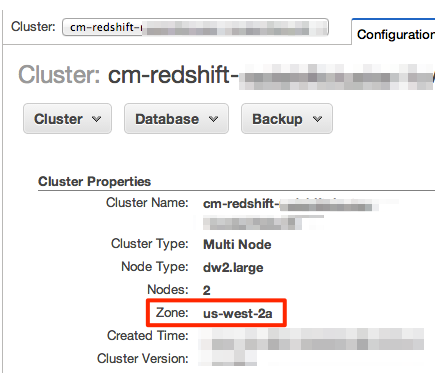

Time becomes central once we start working with user-generated events. The interaction of a user with our product is a sequence of events where time is important. The same is true of a recipient's interactions with one of our MailChimp campaigns. Working with data ordered in time poses some unique challenges that we should take into consideration when we design our data warehouse solution.

In this post, we will see how we can work efficiently with time series data, using Redshift as a data warehouse and data that comes from events triggered by users interacting with our product. We will see what options we have to optimize our data model and what tools Redshift has in its arsenal for optimizing the data and achieving faster query times.

Time series data are unique for a number of reasons. Primarily, updating a row rarely happens. When we work with time series data, we expect to have an ever-growing table in our data warehouse. From a data warehouse maintenance perspective, this is important. First of all, we need to guarantee predictable query times regardless of the point in time for which we want to analyze our data. Second, older data might not be that relevant in the future, or we should not use it at all because it might skew our analytic results. So, it should be easy to discard older data, something that is also related to the cost of running our infrastructure.

The second characteristic of time series data is that its structure is usually quite simple. If, for example, we consider user events, the minimum information that we need is a simple triplet. Taken as a time series, this triplet contains enough information to help us understand the behavior of our user. Of course, more data can be added, like custom attributes with which we'd like to enrich the time series to perform some deeper analysis. But the overall structure of the points in the time series will be flat in most cases. The events that Mixpanel records, a typical case of a service that tracks user events, follow this pattern.

Sort keys determine how rows are physically sorted in a table. Having table rows sorted improves the performance of queries with range-bound filters. Amazon Redshift stores the minimum and maximum values of each of its data blocks in metadata, so a query that filters on a range of the sort key can skip blocks whose range falls outside the filter.

According to the Kimball dimensional modeling methodology, there are four key steps in designing a dimensional model, the first of which is to identify the business process. Tables of ticket sales and venues are a common example of the data involved.

If the data later needs to move to Snowflake, one approach is to use Redshift's UNLOAD command to write the data to S3 and then Snowflake's COPY command to load it from S3 into Snowflake tables.
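To make the point about sort keys and per-block minimum and maximum values more concrete, here is a minimal Python sketch. It is only a toy model of the idea, not how Redshift is implemented: the `Block` class, the block size of 10 rows, and the integer timestamps are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Block:
    """A toy data block that holds a few event timestamps (hypothetical model)."""
    rows: List[int]

    @property
    def min_ts(self) -> int:
        return min(self.rows)

    @property
    def max_ts(self) -> int:
        return max(self.rows)


def build_blocks(timestamps: List[int], block_size: int) -> List[Block]:
    """Split timestamps into fixed-size blocks, in the order they are stored."""
    return [
        Block(timestamps[i:i + block_size])
        for i in range(0, len(timestamps), block_size)
    ]


def range_scan(blocks: List[Block], lo: int, hi: int) -> Tuple[List[int], int]:
    """Return matching timestamps and the number of blocks actually read."""
    matches: List[int] = []
    blocks_read = 0
    for block in blocks:
        # Skip the block when its min/max metadata proves nothing can match.
        if block.max_ts < lo or block.min_ts > hi:
            continue
        blocks_read += 1
        matches.extend(t for t in block.rows if lo <= t <= hi)
    return matches, blocks_read


# 100 timestamps in a scrambled but deterministic order (37 is coprime with 100).
unsorted_ts = [(i * 37) % 100 for i in range(100)]
sorted_ts = sorted(unsorted_ts)

sorted_matches, sorted_reads = range_scan(build_blocks(sorted_ts, 10), 20, 29)
unsorted_matches, unsorted_reads = range_scan(build_blocks(unsorted_ts, 10), 20, 29)

print("blocks read when sorted:", sorted_reads)
print("blocks read when unsorted:", unsorted_reads)
```

Both scans return the same rows, but when the rows are stored in timestamp order the filter touches far fewer blocks, which is the intuition behind sorting an events table by its time column.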

To get started, log into your Redshift database using psql.

Distkeys and sortkeys are Redshift-only column designations that can help speed up query performance. We'll use a table called `orders`, which is contained in the `repsales` schema.
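As a rough illustration of where these designations go, the following Python sketch assembles a `CREATE TABLE` statement with a `DISTKEY` and a `SORTKEY` clause. The column names and types are hypothetical, since the actual layout of `repsales.orders` is not shown here, and the helper `build_create_table` is an illustrative name, not part of any library.

```python
from typing import Dict, Sequence


def build_create_table(
    schema: str,
    table: str,
    columns: Dict[str, str],
    distkey: str,
    sortkeys: Sequence[str],
) -> str:
    """Build a Redshift-style CREATE TABLE statement as a string.

    Identifiers are interpolated directly, so this is only suitable for
    trusted, hard-coded names such as the ones in this sketch.
    """
    for key in (distkey, *sortkeys):
        if key not in columns:
            raise ValueError(f"key column {key!r} is not defined in columns")

    column_lines = ",\n".join(
        f"    {name} {col_type}" for name, col_type in columns.items()
    )
    return (
        f"CREATE TABLE {schema}.{table} (\n{column_lines}\n)\n"
        f"DISTKEY ({distkey})\n"
        f"SORTKEY ({', '.join(sortkeys)});"
    )


# Hypothetical columns: the real orders table may look different.
ddl = build_create_table(
    schema="repsales",
    table="orders",
    columns={
        "order_id": "BIGINT",
        "customer_id": "BIGINT",
        "ordered_at": "TIMESTAMP",
        "amount": "DECIMAL(12,2)",
    },
    distkey="customer_id",
    sortkeys=["ordered_at"],
)
print(ddl)
```

For time series data, putting the timestamp column in the sort key is a common choice, because most queries filter on a time range.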

Time series data, a sequence of data points that are time ordered, often arises in analytics.

Step 1: Retrieve the table's schema

Note: The process outlined here can be used across the board to apply encodings and keys.

upsertkey: During the upsert method of data loading with `redshift_tool`, we need to pass an upsert key. Old records that match this key get updated, and new records are added. Eg. `upsertkey=('upsertkey1','upsertkey2')`

Examples: Append or copy data without primarykey, sortkey, distributionkey.
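The upsert behaviour described above can be sketched in plain Python. This is not the `redshift_tool` implementation or its API; the `upsert` function and the sample rows are assumptions used only to show what "update the old record, add the new one" means for a given upsert key.

```python
from typing import Dict, Iterable, List, Sequence, Tuple

Row = Dict[str, object]


def upsert(
    existing: Iterable[Row],
    incoming: Iterable[Row],
    upsertkey: Sequence[str],
) -> List[Row]:
    """Merge incoming rows into existing rows, matching on the upsert key columns."""
    merged: Dict[Tuple[object, ...], Row] = {}
    for row in existing:
        merged[tuple(row[k] for k in upsertkey)] = dict(row)
    for row in incoming:
        # A matching key overwrites the old record; an unseen key adds a new one.
        merged[tuple(row[k] for k in upsertkey)] = dict(row)
    return list(merged.values())


# Hypothetical sample data keyed on (user_id, event).
existing_rows = [
    {"user_id": 1, "event": "signup", "plan": "free"},
    {"user_id": 2, "event": "signup", "plan": "free"},
]
incoming_rows = [
    {"user_id": 1, "event": "signup", "plan": "pro"},   # updates an old record
    {"user_id": 3, "event": "signup", "plan": "free"},  # adds a new record
]

result = upsert(existing_rows, incoming_rows, upsertkey=("user_id", "event"))
print(result)
```

Because updating a row rarely happens with time series data, most loads will be plain appends, and an upsert key is mainly useful for guarding against reloading the same events twice.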
